Recursive Epistemic Decay

Mar 24, 2026 · prototype

A picture says more than a thousand words, but how much does a picture regress when it is regenerated from exactly a thousand words, and then pushed through recursion again and again, and how quickly does it stop being about the original image and start being about the model’s own preferred defaults.

This was a small side test for another experiment, but it ended up being interesting enough to stand on its own.

The setup was simple and repetitive:

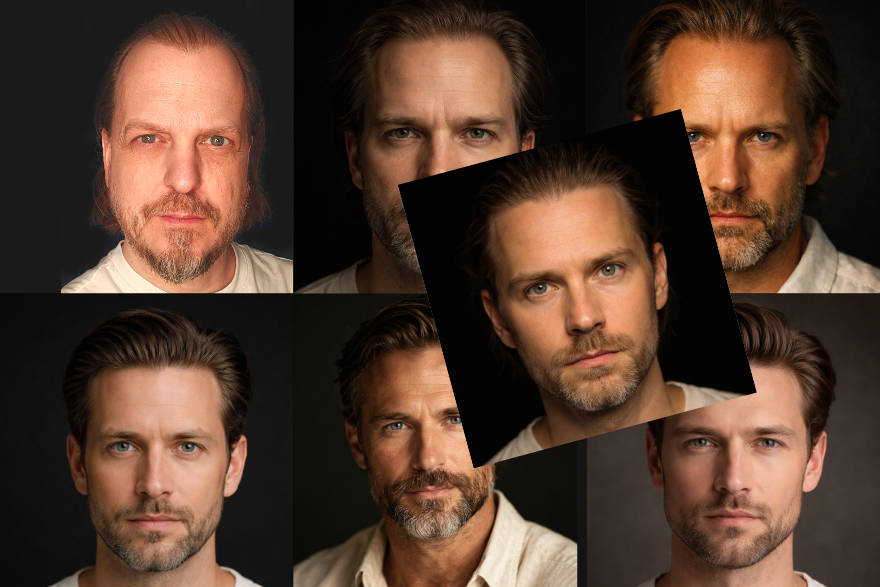

- take my LinkedIn profile image

- ask ChatGPT (Model 5.4, thinking mode) to describe it in exactly 1000 words

- open a fresh chat and generate a new image only from that 1000-word description

- open another fresh chat, describe that new image in 1000 words

- repeat

In total there are three generated images in this recursive chain.

1000 words

There is still a weak resemblance in the first generated image, especially around the eyes, but a lot of identity detail drops immediately, and the most interesting shift happens in the third and fourth frames (the two on the bottom), where describing generated output starts collapsing into a generic but attractive persona, and where the difference between iterations becomes smaller, like it is closing in on an equilibrium state.

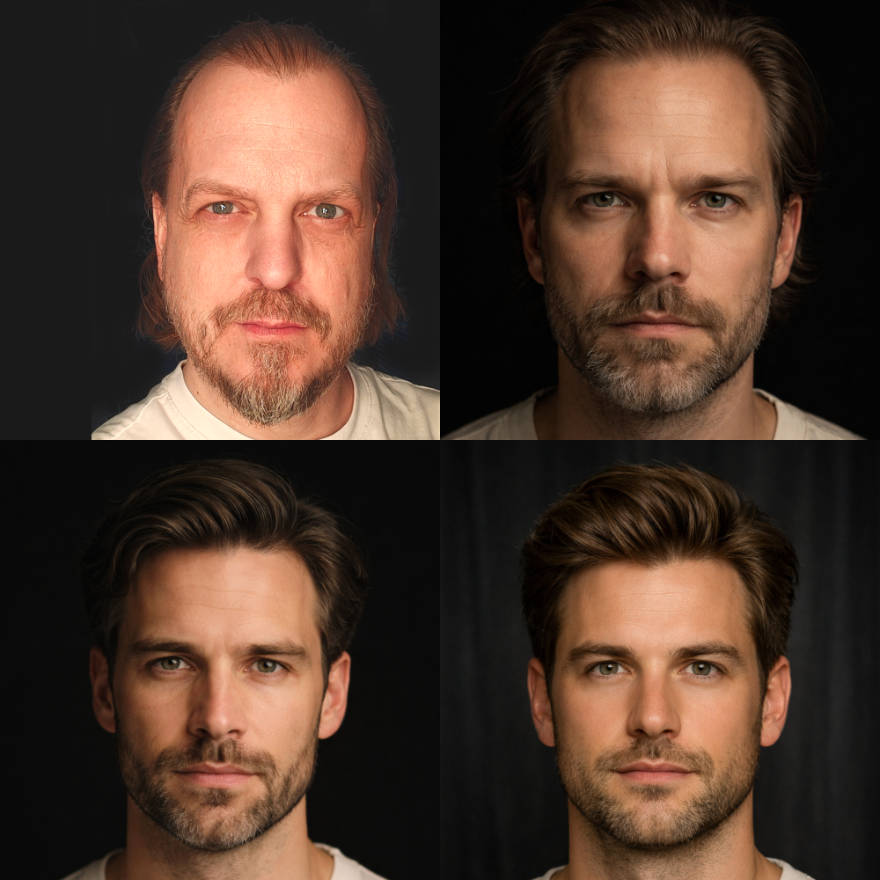

100 words

Cutting the description down to 100 words accelerates the decay of the original face, while still keeping the overall trajectory similar to the 1000-word run, just with weaker resemblance already in the first generated step.

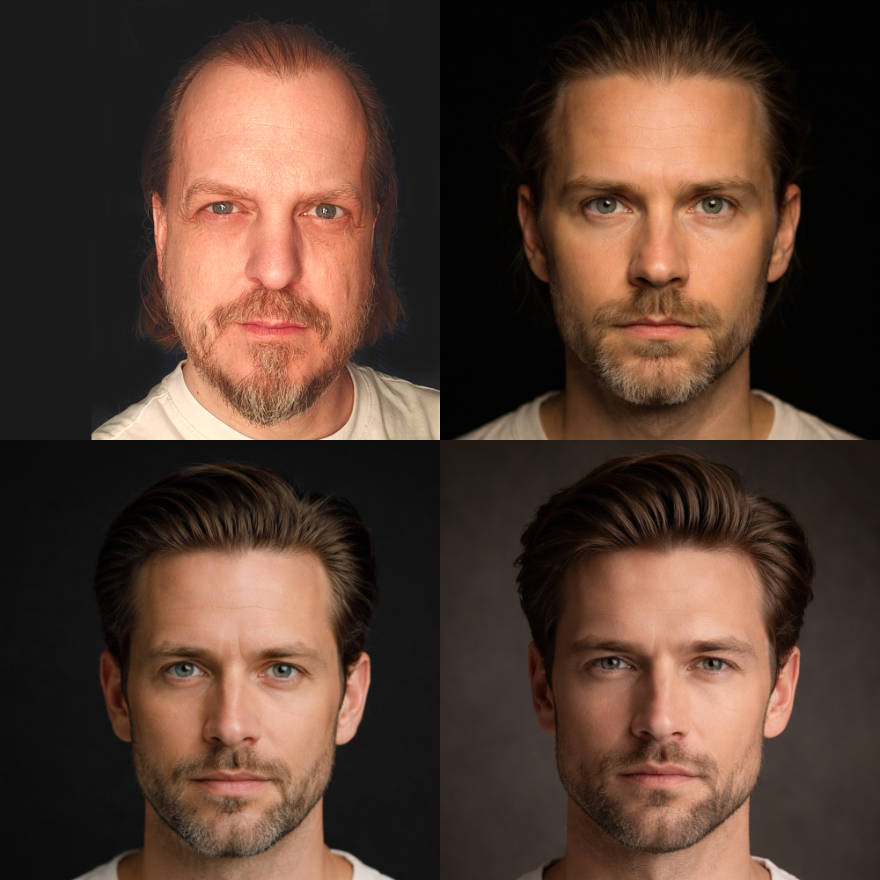

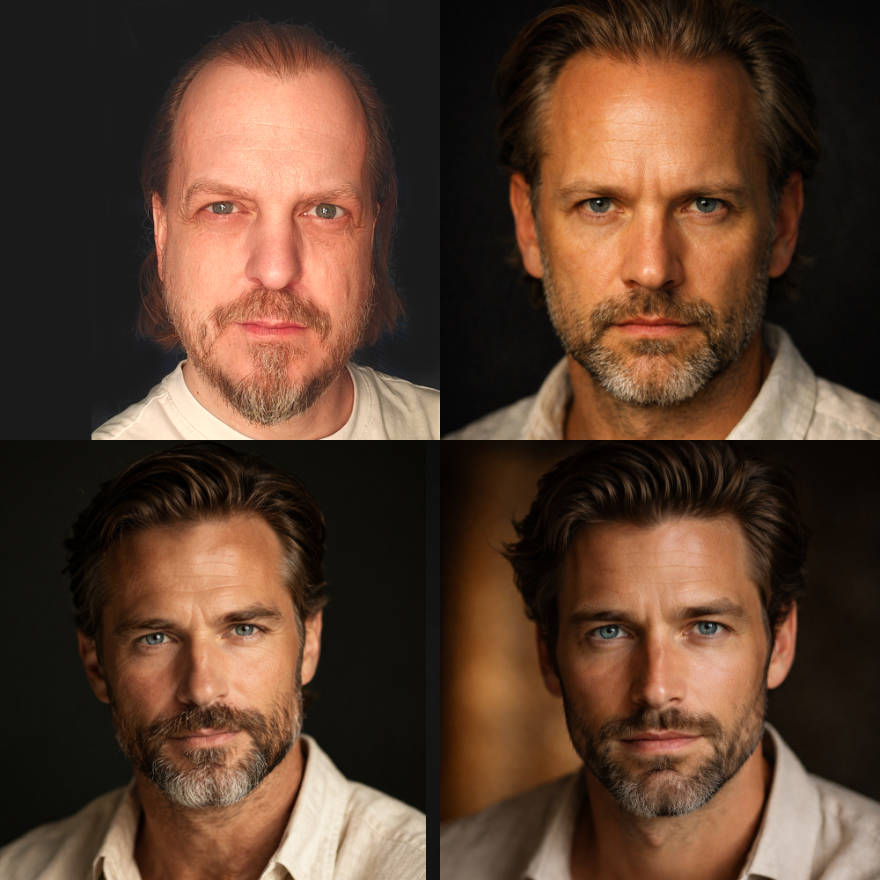

50 words

At 50 words I expected resemblance to disappear immediately, but there is still a weak trace in the first generated image, not enough to fool anyone in a suspect lineup, and the interesting part is that the generic persona is not the exact same one as before, still attractive, just older by what feels like 10 to 15 years, like the model changed archetype while keeping the same gravity.

What this was actually about

I like twisting systems until they produce something they were not explicitly built to produce.

Years ago I used to run national anthems through 20 to 30 rounds of language translation and then bring them back to the source language, mostly because it was absurd and funny, but also because repeated conversion reveals what survives compression and what gets replaced by defaults.

This experiment applies the same instinct to image models.

LLM and image-model hallucinations used to be loud; now they are less obvious, so this was a way to actively provoke them by constraining context length, while also stress-testing the old saying that an image says more than a thousand words.

What stood out is how quickly the outputs drift toward trained ideals when the loop starts feeding on its own products, and if that loop becomes common at scale, with models increasingly trained on assisted or generated content, then those ideals may get reinforced further no matter how large context windows become.